
Ceph Deployment Notes

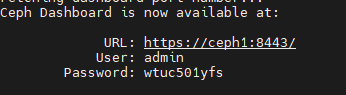

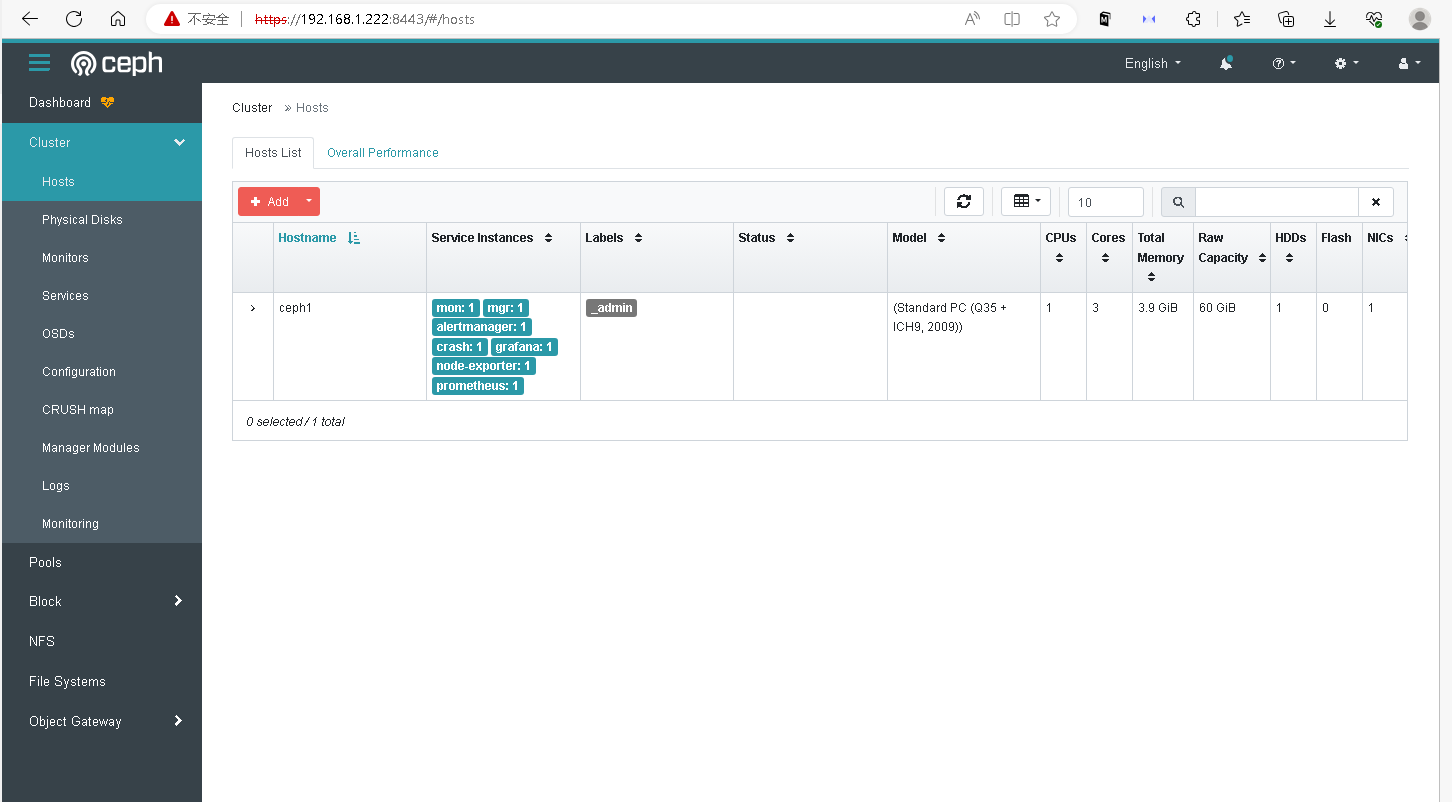

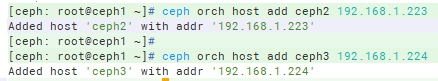

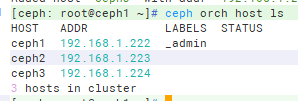

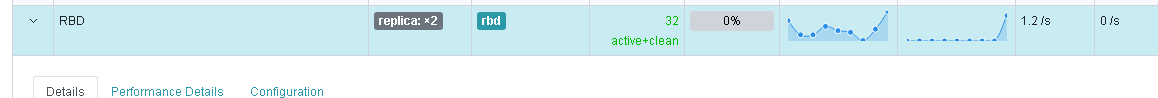

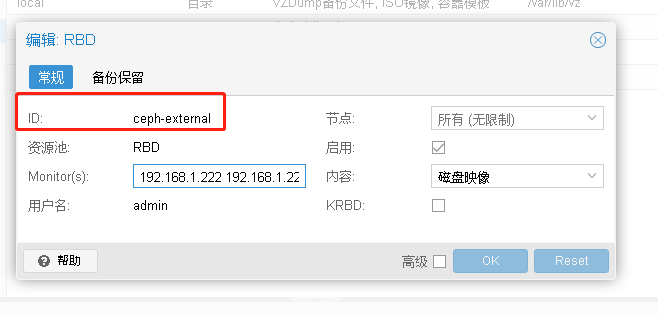

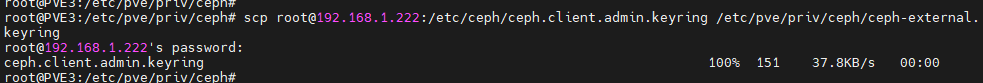

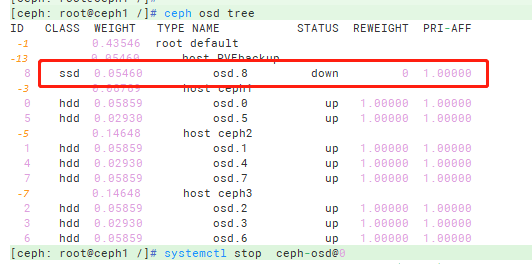

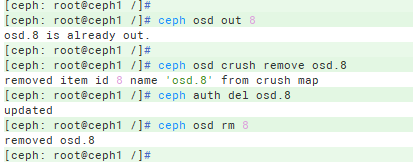

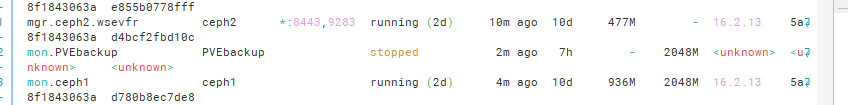

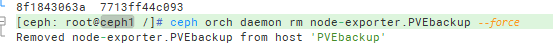

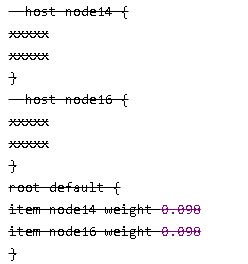

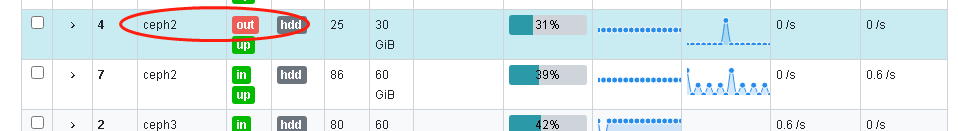

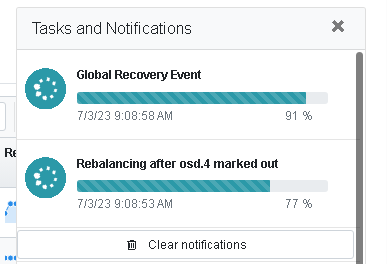

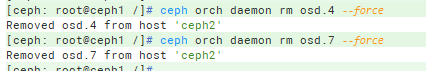

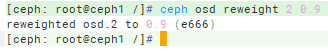

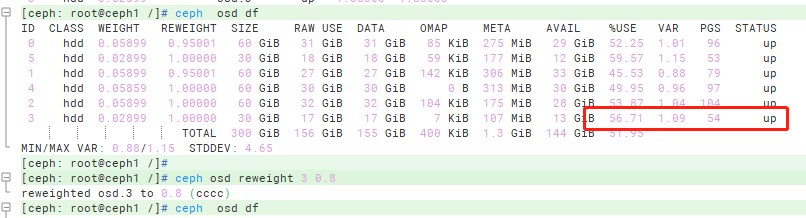

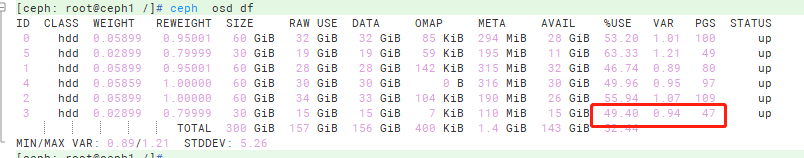

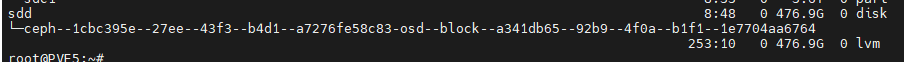

## Prepare the hosts

```
192.168.1.222  ceph1      CephAdm MON Mgr OSD
192.168.1.223  ceph2      MON Mgr OSD
192.168.1.224  ceph3      MON OSD
Newly added host:
192.168.1.7    PVEbackup  OSD
```

## Software requirements

```
Dependencies:
Python 3
Systemd
Podman or Docker for running containers
Time synchronization (such as chrony or NTP)
LVM2 for provisioning storage devices
```

Install python3 and docker before continuing.

## Time synchronization

```
[root@ceph1 ~]# systemctl start chronyd
[root@ceph1 ~]# systemctl enable chronyd
[root@ceph1 ~]# systemctl status chronyd
```

## Install cephadm

```
In Fedora:
dnf -y install cephadm
```

## Download and configure cephadm

```
CEPH_RELEASE=17.2.6
# Download:
curl --silent --remote-name --location https://download.ceph.com/rpm-${CEPH_RELEASE}/el9/noarch/cephadm
# Make it executable (the execute bit is enough; 777 is not needed):
chmod +x cephadm
# Install cephadm:
./cephadm install
```

## Bootstrap a new cluster

```
# Run the ceph bootstrap:
cephadm bootstrap --mon-ip 192.168.1.222
```

A success prompt at the end of the output indicates that the bootstrap succeeded.

## Access the dashboard

```
https://192.168.1.222:8443/
Change the password:
admin
symbian1
```

The cluster started successfully.

## Install additional tools

```
cephadm shell
cephadm install ceph-common
# After installation, check the version and status:
ceph -v
ceph status
```

## Add hosts

```
# 1. Install the cluster's public key on the new hosts:
ceph cephadm get-pub-key > ~/ceph.pub
ssh-copy-id -f -i ~/ceph.pub root@ceph2
ssh-copy-id -f -i ~/ceph.pub root@ceph3
# 2. Add the new hosts:
ceph orch host add ceph2 192.168.1.223
ceph orch host add ceph3 192.168.1.224
```

List the hosts:

```
ceph orch host ls
```

## Add disks as OSDs

```
ceph orch daemon add osd ceph3:/dev/sdc
ceph orch daemon add osd ceph2:/dev/sdc
ceph orch daemon add osd ceph1:/dev/sdc
```

## Mount CephFS

```
mount -t ceph 192.168.1.222:6789,192.168.1.223:6789,192.168.1.224:6789:/ /cephfs -o name=cephfs,secret=AQCkSpJke6kXGRAAO/SijwEsciM/lgcgLuIgkA==
```

## Create an RBD pool for PVE

1. Create a pool in the dashboard.

2. Enable the rbd application on the pool named RBD:

```
ceph osd pool application enable RBD rbd
```

3. Add the RBD storage in the PVE web UI.

4. On PVE3, copy the key for the `ceph-external` storage (the admin keyring) onto PVE:

```
root@PVE3:/mnt/pve# mkdir /etc/pve/priv/ceph
root@PVE3:/etc/pve/priv/ceph# scp root@192.168.1.222:/etc/ceph/ceph.client.admin.keyring /etc/pve/priv/ceph/ceph-external.keyring
root@192.168.1.222's password:
ceph.client.admin.keyring                     100%  151    37.8KB/s   00:00
```

5. Refresh the PVE page; the RBD storage now shows as healthy.

## Removing a failed OSD

First check the OSD list to find the failed OSD (osd.8 here).

```
# Mark the OSD out:
ceph osd out 8
# Remove osd.8 from the cluster:
ceph osd crush remove osd.8
ceph auth del osd.8
ceph osd rm 8
```

List all daemons to see which ones have failed:

```
ceph orch ps
```

Remove the failed daemon so that the dashboard health returns to normal:

```
ceph orch daemon rm node-exporter.PVEbackup --force
```

Force-remove the failed container daemon:

```
ceph orch daemon rm osd.8 --force
```

Completely remove the Ceph data left on PVEbackup:

```
root@PVEbackup:~# rm -rf /etc/ceph/*
root@PVEbackup:~# rm -rf /var/lib/ceph/*/*
root@PVEbackup:~# rm -rf /var/run/ceph/*
```

## Cleaning stale entries from the CRUSH map

After a host is deleted, the CRUSH map still contains the deleted host. Parts of the CRUSH hierarchy are not updated dynamically, so the map has to be edited by hand as follows:

```
ceph osd getcrushmap -o old.map    # export the CRUSH map
crushtool -d old.map -o old.txt    # decompile it to text so it can be edited with vim
cp old.txt new.txt                 # keep a backup
vim new.txt
```

Delete the leftover entries for the removed host, recompile the text into a binary map, and apply the binary map to the cluster:

```
crushtool -c new.txt -o new.map
ceph osd setcrushmap -i new.map
```

Ceph then reconverges. Once convergence completes, the cluster can be used normally.

## Removing unused OSDs

Example: remove osd.4 and osd.7 on ceph2.

1. Lower the weight to 0:

```
[ceph: root@ceph1 /]# ceph osd reweight 4 0
reweighted osd.4 to 0 (0)
[ceph: root@ceph1 /]# ceph osd reweight 7 0
reweighted osd.7 to 0 (0)
```

The cluster starts rebalancing data. Then mark the OSDs out and remove them:

```
[ceph: root@ceph1 /]# ceph osd out 4
osd.4 is already out.
[ceph: root@ceph1 /]# ceph osd out 7
osd.7 is already out.
[ceph: root@ceph1 /]# ceph osd crush remove osd.4
removed item id 4 name 'osd.4' from crush map
[ceph: root@ceph1 /]# ceph osd crush remove osd.7
removed item id 7 name 'osd.7' from crush map
# Remove the OSDs:
ceph osd down osd.4 ; ceph osd rm osd.4
ceph osd down osd.7 ; ceph osd rm osd.7
# Remove the container daemons:
ceph orch daemon rm osd.4 --force
ceph orch daemon rm osd.7 --force
```

## Balancing data across OSDs

```
[ceph: root@ceph1 /]# ceph osd reweight 2 0.9
reweighted osd.2 to 0.9 (e666)
```

## Fixing the Ceph "Recent Crash" warning

`ceph health` reports:

health: HEALTH_WARN 1 daemons have recently crashed

List the crashes, inspect the entry, then archive it to clear the warning:

```
[ceph: root@ceph1 /]# ceph crash ls
ID                                                                ENTITY     NEW
2023-08-24T07:40:36.769198Z_861bc0a0-74ce-419f-beea-0ff4db964a3f  mon.ceph1   *
[ceph: root@ceph1 /]#
[ceph: root@ceph1 /]# ceph crash info 2023-08-24T07:40:36.769198Z_861bc0a0-74ce-419f-beea-0ff4db964a3f
{
    "assert_condition": "abort",
    "assert_file": "/home/jenkins-build/build/workspace/ceph-build/ARCH/x86_64/AVAILABLE_ARCH/x86_64/AVAILABLE_DIST/centos8/DIST/centos8/MACHINE_SIZE/gigantic/release/16.2.13/rpm/el8/BUILD/ceph-16.2.13/src/mon/MonitorDBStore.h",
    "assert_func": "int MonitorDBStore::apply_transaction(MonitorDBStore::TransactionRef)",
    "assert_line": 355,
    "assert_msg": "/home/jenkins-build/build/workspace/ceph-build/ARCH/x86_64/AVAILABLE_ARCH/x86_64/AVAILABLE_DIST/centos8/DIST/centos8/MACHINE_SIZE/gigantic/release/16.2.13/rpm/el8/BUILD/ceph-16.2.13/src/mon/MonitorDBStore.h: In function 'int MonitorDBStore::apply_transaction(MonitorDBStore::TransactionRef)' thread 7f77a90ca700 time 2023-08-24T07:40:36.691365+0000\n/home/jenkins-build/build/workspace/ceph-build/ARCH/x86_64/AVAILABLE_ARCH/x86_64/AVAILABLE_DIST/centos8/DIST/centos8/MACHINE_SIZE/gigantic/release/16.2.13/rpm/el8/BUILD/ceph-16.2.13/src/mon/MonitorDBStore.h: 355: ceph_abort_msg(\"failed to write to db\")\n",
    "assert_thread_name": "ms_dispatch",
    "backtrace": [
        "/lib64/libpthread.so.0(+0x12cf0) [0x7f77b4964cf0]",
        "gsignal()",
        "abort()",
        "(ceph::__ceph_abort(char const*, int, char const*, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&)+0x1b6) [0x7f77b6c2ecb5]",
        "(MonitorDBStore::apply_transaction(std::shared_ptr<MonitorDBStore::Transaction>)+0xb4c) [0x55a9706a868c]",
        "(Elector::persist_epoch(unsigned int)+0x1ac) [0x55a970773bcc]",
        "(ElectionLogic::bump_epoch(unsigned int)+0x56) [0x55a970778ed6]",
        "(ElectionLogic::propose_classic_prefix(int, unsigned int)+0xaf) [0x55a97077adff]",
        "(ElectionLogic::propose_classic_handler(int, unsigned int)+0x2a) [0x55a97077c98a]",
        "(ElectionLogic::receive_propose(int, unsigned int, ConnectionTracker const*)+0x7d) [0x55a97077f62d]",
        "(Elector::handle_propose(boost::intrusive_ptr<MonOpRequest>)+0x6fe) [0x55a97076fafe]",
        "(Elector::dispatch(boost::intrusive_ptr<MonOpRequest>)+0x1066) [0x55a9707750d6]",
        "(Monitor::dispatch_op(boost::intrusive_ptr<MonOpRequest>)+0x112e) [0x55a9706ee69e]",
        "(Monitor::_ms_dispatch(Message*)+0x670) [0x55a9706ef320]",
        "(Dispatcher::ms_dispatch2(boost::intrusive_ptr<Message> const&)+0x5c) [0x55a97071e4cc]",
        "(DispatchQueue::entry()+0x126a) [0x7f77b6e756ba]",
        "(DispatchQueue::DispatchThread::entry()+0x11) [0x7f77b6f28d21]",
        "/lib64/libpthread.so.0(+0x81ca) [0x7f77b495a1ca]",
        "clone()"
    ],
    "ceph_version": "16.2.13",
    "crash_id": "2023-08-24T07:40:36.769198Z_861bc0a0-74ce-419f-beea-0ff4db964a3f",
    "entity_name": "mon.ceph1",
    "os_id": "centos",
    "os_name": "CentOS Stream",
    "os_version": "8",
    "os_version_id": "8",
    "process_name": "ceph-mon",
    "stack_sig": "03cf99dbd7c1e6bc9620d22a5ee47d4bdc3ba23dc800f80a53b457756763c9cf",
    "timestamp": "2023-08-24T07:40:36.769198Z",
    "utsname_hostname": "ceph1",
    "utsname_machine": "x86_64",
    "utsname_release": "5.17.5-300.fc36.x86_64",
    "utsname_sysname": "Linux",
    "utsname_version": "#1 SMP PREEMPT Thu Apr 28 15:51:30 UTC 2022"
}
[ceph: root@ceph1 /]# ceph crash archive 2023-08-24T07:40:36.769198Z_861bc0a0-74ce-419f-beea-0ff4db964a3f
```

## Clearing leftover Ceph LVM volumes from a disk

```
root@PVE5:~# dmsetup remove ceph--1cbc395e--27ee--43f3--b4d1--a7276fe58c83-osd--block--a341db65--92b9--4f0a--b1f1--1e7704aa6764
```

## References

```
https://docs.ceph.com/en/latest/cephadm/install/#requirements
https://blog.csdn.net/qq_50255609/article/details/127399954
Removing OSDs:
https://www.cnblogs.com/wshenjin/p/11550176.html
```
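As a recap of the OSD removal procedure described earlier (reweight to 0, mark out, remove from the CRUSH map, remove the OSD, then remove the orchestrator daemon), here is a minimal dry-run sketch. It only prints the commands for review and never calls `ceph` itself. The function name `print_osd_removal_cmds` is hypothetical and not part of Ceph; the command sequence is assumed to be exactly the one used in these notes for osd.4 and osd.7.

```shell
#!/usr/bin/env bash
# Dry-run helper (hypothetical name, not a Ceph tool): prints the removal
# commands from these notes for each OSD id, without executing them.
print_osd_removal_cmds() {
    local id
    for id in "$@"; do
        # Accept only numeric OSD ids such as 4 or 7.
        case "$id" in
            ''|*[!0-9]*)
                echo "invalid OSD id: $id" >&2
                return 1
                ;;
        esac
        echo "ceph osd reweight $id 0"              # drain data off the OSD
        echo "ceph osd out $id"                     # mark it out
        echo "ceph osd crush remove osd.$id"        # drop it from the CRUSH map
        echo "ceph osd down osd.$id"                # mark it down
        echo "ceph osd rm osd.$id"                  # remove the OSD entry
        echo "ceph orch daemon rm osd.$id --force"  # remove the container daemon
    done
}

# Example: review the plan for osd.4 and osd.7 before running anything.
print_osd_removal_cmds 4 7
```

Printing the plan first makes it easier to wait for rebalancing to finish between the reweight step and the remaining steps, instead of running everything in one go.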

dz

August 26, 2023, 10:16
